1. YouTube as a Research Corpus

YouTube hosts over 800 million videos spanning a vast range of topics, languages, and cultures. For researchers, this is an unprecedented corpus of naturally occurring speech, public discourse, educational content, and cultural expression. Unlike survey data or interview transcripts, YouTube content is produced without researcher influence, so it captures communication as it occurs in its natural context.

Research areas that leverage YouTube data include media studies, political communication, linguistics, education, public health messaging, cultural studies, and digital ethnography. In each field, the ability to extract and analyze video content as text has opened new methodological possibilities.

2. Building a Research Dataset

The methodology for building a YouTube-based research dataset typically follows these steps:

1. Define your research question and the type of content relevant to it.

2. Identify relevant videos through YouTube search, channel lists, or playlist curation.

3. Document your selection criteria (language, date range, view count, channel type, etc.).

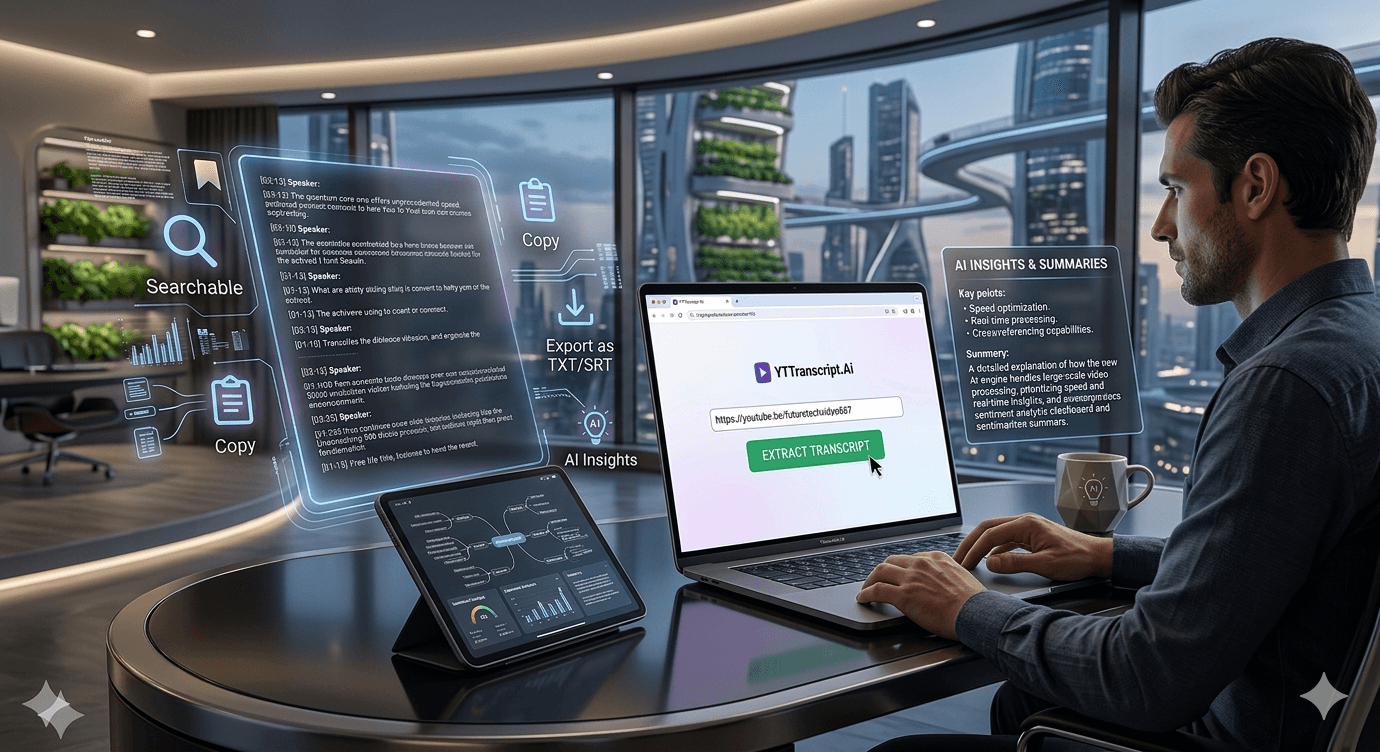

4. Extract transcripts from all selected videos using bulk extraction.

5. Export transcripts in a structured format suitable for your analysis tools.

6. Apply your analytical framework (coding, thematic analysis, sentiment analysis, etc.).

The bulk extraction step is what makes the method practical. Manually transcribing even 50 videos could take weeks, while automated extraction typically completes in minutes, which makes large-scale studies feasible for individual researchers and small teams.
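As a rough illustration of steps 4 and 5, the sketch below loops over a list of video IDs, records the outcome for each one, and exports the result as JSON Lines. It assumes you supply your own `fetch_transcript` callable, since the extraction tool is not specified here; `fake_fetch`, `TranscriptRecord`, and the segment layout (`start`, `text`) are hypothetical names used only for this example.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Callable, Iterable


@dataclass
class TranscriptRecord:
    video_id: str
    url: str
    extracted_at: str  # ISO 8601 timestamp in UTC
    status: str        # "ok" or "error"
    segments: list     # assumed layout: [{"start": seconds, "text": "..."}]
    error: str = ""


def build_dataset(video_ids: Iterable[str],
                  fetch_transcript: Callable[[str], list]) -> list:
    """Fetch every transcript, keeping a record even when extraction fails."""
    records = []
    for video_id in video_ids:
        url = f"https://www.youtube.com/watch?v={video_id}"
        now = datetime.now(timezone.utc).isoformat()
        try:
            segments = fetch_transcript(video_id)
            records.append(TranscriptRecord(video_id, url, now, "ok", segments))
        except Exception as exc:  # log the failure instead of silently dropping the video
            records.append(TranscriptRecord(video_id, url, now, "error", [], str(exc)))
    return records


def to_jsonl(records) -> str:
    """Serialize one record per line, a format most analysis tools can ingest."""
    return "\n".join(json.dumps(asdict(r), ensure_ascii=False) for r in records)


def fake_fetch(video_id):
    """Hypothetical stand-in for a real extraction tool."""
    if video_id == "missing":
        raise LookupError("no transcript available")
    return [{"start": 0.0, "text": f"hello from {video_id}"}]


records = build_dataset(["abc123", "missing"], fake_fetch)
jsonl = to_jsonl(records)
```

Keeping failed videos in the dataset with an `error` status, rather than skipping them, lets you report how many selected videos had no usable transcript.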

3. Analytical Approaches

Once you have a corpus of transcripts, several analytical approaches are common:

Thematic analysis: code transcripts for recurring themes, patterns, and narratives. Import the transcripts into NVivo, ATLAS.ti, or MAXQDA for systematic coding.

Content analysis: quantify the frequency of specific topics, terms, or framings across the corpus.

Discourse analysis: examine how language is used to construct meaning, identity, or power relationships.

Sentiment analysis: use AI or NLP tools to assess the emotional tone of content across videos or over time.

Comparative analysis: compare content across channels, time periods, languages, or cultural contexts.

Each approach benefits from having clean, timestamped transcripts that can be systematically analyzed as text data.
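To make the content and comparative approaches concrete, here is a minimal sketch that counts a fixed set of terms per channel and normalizes by channel length. The sample corpus, the `TERMS` set, and the per-1,000-token rate are all assumptions chosen for illustration; a real study would define its own codebook and tokenization.

```python
import re
from collections import Counter, defaultdict

# Toy corpus: one entry per transcript, tagged with its channel (illustrative data).
corpus = [
    {"channel": "A", "text": "Vaccines are safe. Vaccine trials were large."},
    {"channel": "A", "text": "The trial data is public."},
    {"channel": "B", "text": "Is the vaccine safe? Risk, risk, risk."},
]

# Hypothetical term list; in practice this comes from your coding scheme.
TERMS = {"vaccine", "vaccines", "safe", "risk", "trial", "trials"}


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def term_rates(docs, terms):
    """Return occurrences per 1,000 tokens for each tracked term, by channel."""
    counts = defaultdict(Counter)
    totals = Counter()
    for doc in docs:
        tokens = tokenize(doc["text"])
        totals[doc["channel"]] += len(tokens)
        counts[doc["channel"]].update(t for t in tokens if t in terms)
    return {
        channel: {term: 1000 * n / totals[channel] for term, n in counter.items()}
        for channel, counter in counts.items()
    }


rates = term_rates(corpus, TERMS)
for channel, by_term in sorted(rates.items()):
    print(channel, {term: round(rate, 1) for term, rate in sorted(by_term.items())})
```

Normalizing by token count matters for comparison: channels that publish longer videos would otherwise appear to mention every term more often.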

4. Ethical Considerations

Using YouTube content for research raises important ethical questions. Key considerations:

Public data: YouTube videos are publicly accessible, but researchers should still consider the expectations of creators and of the people who appear in their videos. Anonymize quotations from personal channels.

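Copyright: transcripts reproduce copyrighted content. In the United States, fair use often covers academic research and analysis, but it is assessed case by case and other jurisdictions apply different rules. Consult your institution's copyright or legal office on reuse, and your IRB or ethics board on questions involving the people in the videos.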

Representation: YouTube content is not representative of any population. Selection bias, algorithmic curation, and platform demographics all influence what content exists and what is findable.

Reproducibility: videos can be deleted or made private. Archive your dataset and document all video IDs, URLs, and extraction dates for reproducibility.
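One way to document a dataset for reproducibility is a manifest file that sits alongside the archived transcripts. The sketch below assumes transcripts are already held in memory as a dict keyed by video ID; the column names and the SHA-256 checksum are illustrative choices, not a required format.

```python
import csv
import hashlib
import io
from datetime import date

FIELDS = ["video_id", "url", "extracted_on", "sha256", "n_chars"]


def manifest_rows(transcripts, extracted_on):
    """Build one manifest row per transcript, sorted by video ID for stable output."""
    rows = []
    for video_id, text in sorted(transcripts.items()):
        rows.append({
            "video_id": video_id,
            "url": f"https://www.youtube.com/watch?v={video_id}",
            "extracted_on": extracted_on.isoformat(),
            # The checksum lets others verify that an archived transcript is unchanged.
            "sha256": hashlib.sha256(text.encode("utf-8")).hexdigest(),
            "n_chars": len(text),
        })
    return rows


def write_manifest(rows, fh):
    """Write the manifest as CSV to any file-like object."""
    writer = csv.DictWriter(fh, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)


# Illustrative data; real IDs and text come from your extraction step.
transcripts = {"xyz789": "second transcript", "abc123": "hello world"}
rows = manifest_rows(transcripts, date(2024, 1, 15))

buffer = io.StringIO()
write_manifest(rows, buffer)
manifest_csv = buffer.getvalue()
```

If a video is later deleted or made private, the manifest still records what was collected and when, and the checksum ties each row to the archived text.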